Introduction: Media, Misinformation and the Digital Public Sphere

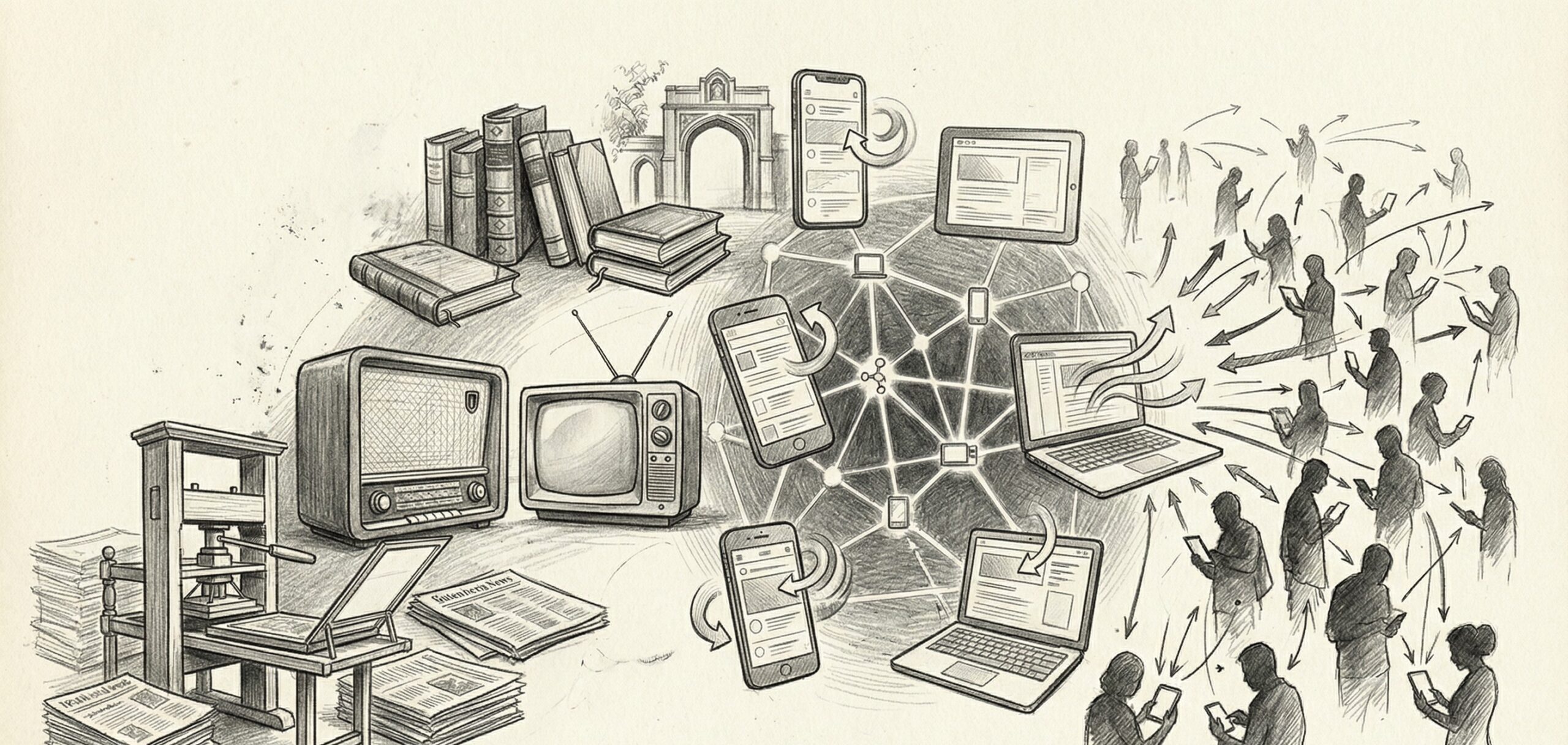

The relationship between media, misinformation and the digital public sphere has become one of the most consequential issues shaping modern democracies. The digital revolution has fundamentally transformed how information is produced, distributed, and consumed.

Social media platforms, online news outlets, video-sharing services, messaging applications, and algorithmic recommendation systems have displaced traditional gatekeepers as the primary channels of public discourse.

While digital media has democratized access to information and amplified marginalized voices, it has also accelerated the spread of misinformation, disinformation, and polarizing narratives. The digital public sphere now operates at unprecedented speed and scale, raising concerns about trust, accountability, media literacy, and democratic stability.

Understanding media, misinformation and the digital public sphere requires examining technological infrastructure, platform incentives, political communication strategies, regulatory debates, and societal adaptation to digital information ecosystems.

The Evolution of the Public Sphere

Historically, public discourse occurred through:

- Print newspapers

- Broadcast television

- Radio journalism

- Academic institutions

Traditional media organizations operated under editorial standards and professional oversight.

The rise of the internet disrupted this structure.

Digital platforms enabled individuals to publish content instantly without centralized editorial control. This shift expanded participation but reduced traditional gatekeeping mechanisms.

The public sphere became decentralized, fragmented, and algorithmically mediated.

The Rise of Platform-Based Media

Social media platforms transformed information distribution.

Users now encounter news through:

- Algorithmic feeds

- Trending topics

- Influencer commentary

- Peer-shared content

Unlike traditional media, platform-based media prioritizes engagement metrics.

Content that provokes strong emotional reactions often spreads more rapidly than measured reporting.

This incentive structure shapes the quality of the information that circulates.
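To make the incentive concrete, here is a minimal sketch of engagement-based ranking. It is not any real platform's algorithm: the `Post` fields, the `engagement_score` function, the weights, and the sample numbers are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Post:
    title: str
    likes: int
    shares: int
    comments: int


def engagement_score(post: Post, w_like: float = 1.0,
                     w_share: float = 3.0, w_comment: float = 2.0) -> float:
    """Weighted sum of interactions; the weights are illustrative assumptions."""
    return w_like * post.likes + w_share * post.shares + w_comment * post.comments


def rank_feed(posts: list[Post]) -> list[Post]:
    """Order posts from highest to lowest engagement score."""
    return sorted(posts, key=engagement_score, reverse=True)


# Hypothetical sample data: the rumor has fewer likes but far more shares and comments.
posts = [
    Post("Measured policy analysis", likes=120, shares=10, comments=15),
    Post("Outrage-inducing rumor", likes=90, shares=80, comments=60),
]

feed = rank_feed(posts)
for post in feed:
    print(post.title, engagement_score(post))
```

Nothing in this scoring rule looks at accuracy. A feed ranked this way rewards whatever draws the most interaction, which is why the item that provokes more reactions ends up on top in this example.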

Understanding Misinformation and Disinformation

Misinformation refers to inaccurate information shared without intent to deceive.

Disinformation involves deliberate efforts to manipulate or mislead.

Digital ecosystems facilitate both forms due to:

- Rapid sharing

- Low barriers to publication

- Limited verification processes

- Anonymous accounts

False narratives can circulate widely before correction mechanisms activate.

The speed of digital communication often outpaces fact-checking efforts.
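As a rough illustration of why corrections lag, the toy model below assumes that each person who shares a claim prompts a fixed number of new shares in the next round, and that a correction only begins circulating after a fixed delay. The function name, the branching factor, and the delays are hypothetical values chosen for illustration, not measurements of any real platform.

```python
def reach_before_correction(shares_per_person: int, delay_rounds: int) -> int:
    """Count people who have shared a claim before a correction starts circulating.

    Assumes (hypothetically) that every new sharer triggers
    `shares_per_person` further shares in the following round.
    """
    total = 0
    new_sharers = 1  # the original poster
    for _ in range(delay_rounds):
        total += new_sharers
        new_sharers *= shares_per_person
    return total


early = reach_before_correction(shares_per_person=3, delay_rounds=3)
late = reach_before_correction(shares_per_person=3, delay_rounds=6)
print(early, late)
```

Under these assumptions, a correction that arrives after three rounds follows 13 sharers, while one that arrives after six rounds follows 364. Real sharing patterns are far less regular, but the sketch shows why even a short verification delay can leave a correction well behind the original claim.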

Algorithmic Amplification

Recommendation algorithms curate what users see based on their past engagement patterns.

This may lead to:

- Echo chambers

- Filter bubbles

- Confirmation bias reinforcement

Users may encounter content aligning with existing beliefs while rarely encountering opposing perspectives.

The opacity of these algorithms makes it harder to hold platforms accountable for what they amplify.

The design of recommendation systems influences democratic discourse.
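The narrowing effect described in this section can be sketched with a deliberately simple recommender. This is an assumption-laden toy, not a description of any production system: the `recommend` function, the two-viewpoint catalog, and the rule "prefer topics the user clicked before" are all hypothetical.

```python
from collections import Counter


def recommend(catalog: list[tuple[str, str]], click_history: list[str],
              k: int = 3) -> list[tuple[str, str]]:
    """Return k (item_id, topic) pairs, preferring topics the user clicked most.

    Ties keep catalog order because Python's sort is stable.
    """
    topic_clicks = Counter(click_history)
    ranked = sorted(catalog, key=lambda item: topic_clicks[item[1]], reverse=True)
    return ranked[:k]


# Hypothetical catalog with an even mix of two viewpoints.
catalog = [
    ("a1", "viewpoint_a"), ("b1", "viewpoint_b"), ("a2", "viewpoint_a"),
    ("b2", "viewpoint_b"), ("a3", "viewpoint_a"), ("b3", "viewpoint_b"),
]

# A new user with no history sees a mixed feed.
fresh_feed = recommend(catalog, click_history=[])
fresh_topics = {topic for _, topic in fresh_feed}

# A single click on one viewpoint is enough to tilt the whole feed.
tilted_feed = recommend(catalog, click_history=["viewpoint_a"])
tilted_topics = {topic for _, topic in tilted_feed}

print(fresh_topics, tilted_topics)
```

In this sketch the feed shifts from two viewpoints to one after a single click, and each further click on that feed would only reinforce the preference. Real recommenders use many more signals and often include diversity measures, so the example shows the feedback loop in principle rather than its actual magnitude.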

Political Communication and Information Warfare

Governments, political campaigns, and non-state actors utilize digital platforms for communication.

Digital campaigns may involve:

- Targeted advertising

- Coordinated messaging strategies

- Bot networks

- Deepfake content

Information operations can shape public perception domestically and internationally.

Geopolitical rivalry increasingly includes digital information campaigns.

The digital public sphere has become a domain of strategic competition.

Journalism in a Digital Era

Traditional journalism faces financial and structural challenges.

Advertising revenue has shifted toward digital platforms.

Local news organizations struggle with sustainability.

At the same time, digital tools enable investigative reporting and global collaboration.

Subscription models, nonprofit funding, and public broadcasting reforms seek to stabilize media ecosystems.

The future of journalism depends on adapting to platform-dominated distribution systems.

Trust and Institutional Legitimacy

Public trust in media institutions has fluctuated in many regions.

Factors influencing trust include:

- Perceived bias

- Political polarization

- Transparency of sourcing

- Media ownership concentration

Digital misinformation can erode confidence in credible institutions.

Restoring trust requires consistent standards, transparency, and media literacy initiatives.

Regulation and Platform Governance

Governments debate how to regulate digital platforms.

Policy considerations include:

- Content moderation standards

- Platform liability rules

- Transparency requirements

- Data protection regulations

Regulatory approaches vary across regions.

Some prioritize free expression; others emphasize content control and oversight.

Balancing democratic openness with harm prevention remains complex.

Media Literacy and Education

Long-term resilience against misinformation depends on education.

Media literacy programs aim to teach:

- Source verification skills

- Critical analysis techniques

- Digital content evaluation

- Fact-checking awareness

Education systems increasingly integrate digital literacy into curricula.

Empowered users contribute to healthier information ecosystems.

The Role of Artificial Intelligence

Artificial intelligence influences both misinformation production and detection.

AI systems can generate:

- Synthetic text

- Deepfake videos

- Automated social media posts

Simultaneously, AI tools assist in:

- Content moderation

- Pattern detection

- Automated fact-checking

The dual-use nature of AI complicates governance.

Technological safeguards must evolve alongside generative capabilities.

Economic Incentives and Platform Design

Digital platforms operate under profit-driven business models.

Advertising revenue often correlates with engagement volume.

Sensational or polarizing content may generate higher interaction rates.

Reforming these economic incentives may improve the quality of information that platforms surface.

Debates continue regarding subscription models, public funding, and platform accountability.

Cross-Border Information Flows

The digital public sphere transcends national boundaries.

Content created in one country can influence audiences globally.

International regulatory coordination remains limited.

Jurisdictional conflicts arise regarding content removal and platform governance.

Globalization complicates enforcement mechanisms.

Civil Society and Independent Fact-Checking

Independent organizations contribute to misinformation mitigation.

Fact-checking initiatives analyze viral claims and provide context.

Civil society groups advocate for transparency and accountability.

Collaboration between platforms and independent organizations can enhance response effectiveness.

However, politicization may undermine trust in fact-checking efforts.

Digital Public Sphere and Democracy

Democratic systems depend on informed citizen participation.

When misinformation dominates discourse, electoral outcomes and policy debates may be influenced by inaccurate narratives.

However, digital platforms also enable:

- Grassroots mobilization

- Civic engagement

- Minority representation

The digital public sphere contains both empowering and destabilizing dynamics.

Effective governance seeks to maximize benefits while mitigating risks.

Risks of Over-Regulation

Excessive regulation may:

- Restrict legitimate expression

- Suppress dissent

- Concentrate power in state authorities

- Undermine open debate

Safeguarding democratic norms requires careful balance between regulation and freedom.

Pluralism remains essential to democratic resilience.

Future Outlook: Building Resilient Information Ecosystems

The future of media, misinformation and the digital public sphere will likely involve:

- Enhanced transparency requirements

- Improved AI detection systems

- Expanded digital literacy education

- International dialogue on platform governance

- Hybrid public-private accountability models

Resilient information ecosystems depend on cooperation among governments, platforms, civil society, journalists, and citizens.

Digital transformation is irreversible; adaptation is essential.

Frequently Asked Questions

What is the digital public sphere?

The digital public sphere refers to online platforms and networks where public discourse, political communication, and information exchange occur.

Why does misinformation spread so quickly online?

Low publication barriers, algorithmic amplification, emotional engagement incentives, and rapid sharing contribute to the fast spread of misinformation.

Can regulation reduce misinformation?

Regulation may improve transparency and accountability, but long-term resilience also depends on media literacy, responsible platform design, and civic engagement.

Democracy in the Information Age

Media, misinformation and the digital public sphere represent defining challenges of the information age. Digital technologies have transformed democratic participation, journalistic practice, and public discourse. While misinformation presents significant risks, digital platforms also enable unprecedented access to knowledge and civic engagement.

Sustaining democratic resilience requires adaptive governance, transparent platform policies, informed citizens, and ongoing institutional reform. The digital public sphere will continue to evolve, and societies must evolve with it.

Editorial Note: This article is intended for informational and educational purposes only. It provides analytical insights based on publicly available information and does not constitute financial, legal, or political advice. Readers are encouraged to consult official sources and expert advisors for verified guidance.